DeepScope

Real-Time Deepfake Detection Using Biological Plausibility Metrics

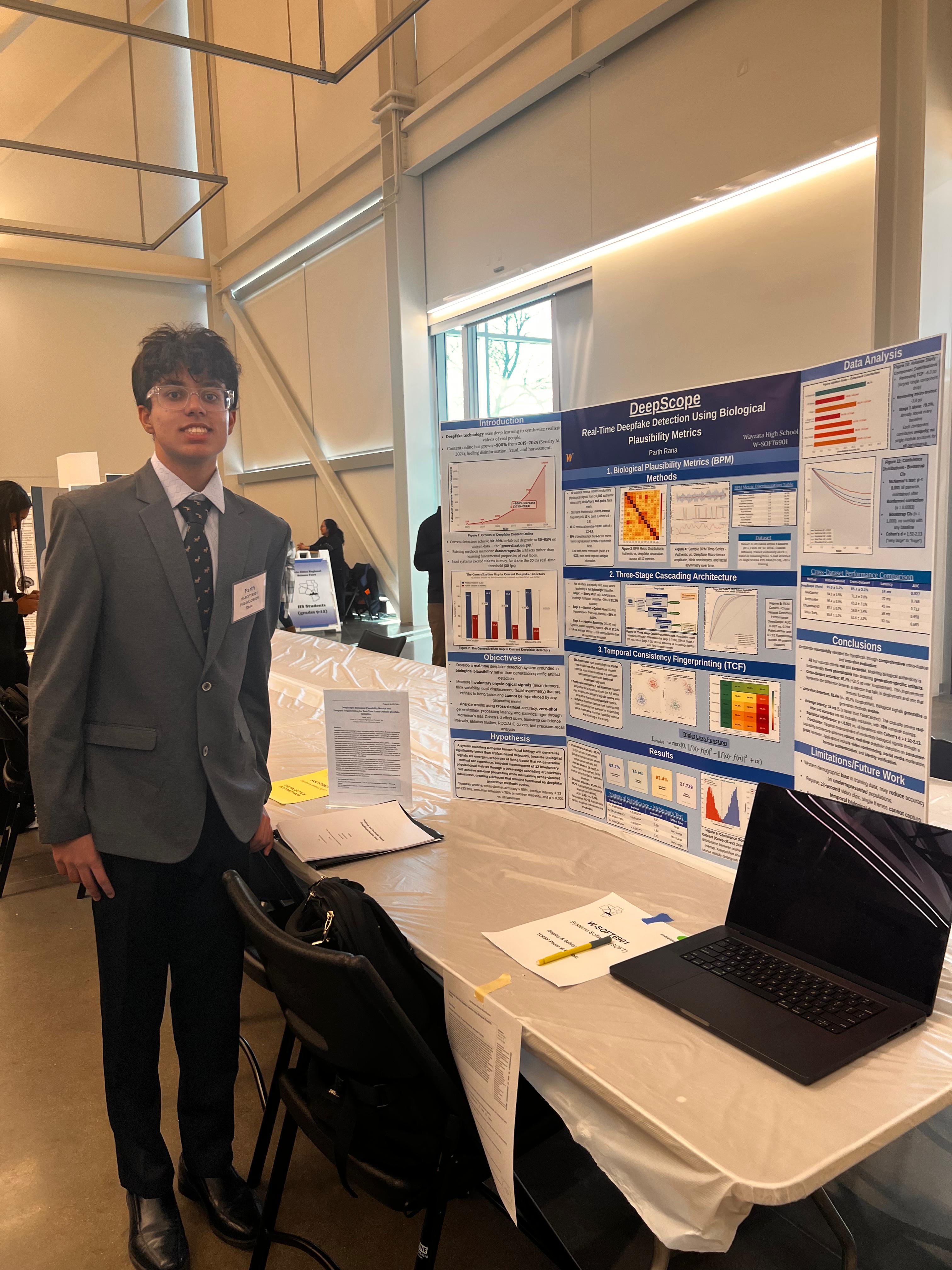

Wayzata High School · Parth Rana · Project ID: W-SOFT6901 · Twin Cities Regional Science Fair 2026

TCRSF 2026 — Twin Cities Regional Science Fair

Parth Rana presenting DeepScope · Project W-SOFT6901 · Wayzata High School

Recognition

Competition achievements

Upcoming — May 9–15, 2026 — Phoenix, Arizona

Regeneron ISEF 2026: Accepted

Accepted to the Regeneron International Science and Engineering Fair — the world’s largest high school science competition, organized by the Society for Science. Finalists compete across 22 scientific categories for nearly $9 million in awards, prizes, and scholarships, with more than 45 professional organizations awarding Special Awards. The 2026 fair is held at the Phoenix Convention Center, Phoenix, AZ.

Upcoming — 2026 — Saint Paul, Minnesota

Minnesota State Science & Engineering Fair: Advancing

Qualified from TCRSF to advance to the Minnesota State Science & Engineering Fair, organized by the Minnesota Academy of Science. Hundreds of middle and high school students from across Minnesota present original research to STEM professionals and compete for prestigious awards. Top students from the state fair are selected to compete at ISEF.

February 2026 — Twin Cities, Minnesota — Completed

TCRSF 2026: Competed & Advanced

Presented DeepScope at the Twin Cities Regional Science Fair (TCRSF), serving students across 9 Minnesota counties including Hennepin, Ramsey, Dakota, Anoka, and Washington. TCRSF is the regional gateway for outstanding projects to advance to the Minnesota State Science & Engineering Fair and, for high school winners, directly to ISEF. Project ID: W-SOFT6901, Wayzata High School.

ISEF 2026

World’s largest high school science fair · Phoenix, AZ · May 9–15, 2026 · 22 categories · ~$9M in awards

MN State Fair

Minnesota Academy of Science · Saint Paul, MN · Hundreds of student researchers · Judged by STEM professionals

TCRSF 2026

Twin Cities Regional Science Fair · 9 MN counties · Regional gateway to State Fair & ISEF · W-SOFT6901

The Problem

Why deepfake detection is hard

Deepfake video content grew ~900% from 2019 to 2024, fueling disinformation, fraud, and harassment. Yet current state-of-the-art detectors reach 90–99% accuracy in-lab but collapse to 50–65% on unseen data, a drop known as the “generalization gap.”

Existing methods memorize dataset-specific compression artifacts rather than learning fundamental biological properties of authentic faces. Most systems also exceed 100 ms latency, far above the 33 ms real-time threshold (30 fps). DeepScope addresses both problems simultaneously.

Hypothesis

Biological plausibility as ground truth

A system modeling authentic human facial biology will generalize significantly better than artifact-based detectors, because biological signals are emergent properties of living tissue that no generation method can reproduce. Targeted measurement of 12 involuntary physiological metrics via a three-stage cascading architecture will achieve real-time processing while maintaining cross-dataset robustness as deepfake generation methods evolve.

Methods — Part 1

Biological Plausibility Metrics (BPM)

12 statistical metrics were derived from 10,000 authentic videos using a 468-point MediaPipe face mesh, capturing involuntary physiological signals that living tissue produces but generative models cannot replicate.
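The source does not name the 12 metrics or say how each is computed, so the sketch below only illustrates the general pattern: compute a per-clip statistic from face-mesh landmarks, then score a new clip against a reference distribution built from authentic clips. The metric `micro_motion_metric`, the helper `plausibility_z`, and the synthetic landmark data are all hypothetical and are not part of DeepScope.

```python
import numpy as np

def micro_motion_metric(landmarks):
    """Hypothetical metric (not one of the project's 12): temporal std of the
    mean frame-to-frame landmark displacement.

    landmarks: array of shape (frames, 468, 3), one face mesh per frame.
    """
    step = np.linalg.norm(np.diff(landmarks, axis=0), axis=-1)  # (frames-1, 468)
    return float(step.mean(axis=1).std())

def plausibility_z(value, ref_mean, ref_std):
    """Distance of a clip's metric from the authentic reference, in std units."""
    return (value - ref_mean) / ref_std

rng = np.random.default_rng(0)

def synthetic_clip(jitter, frames=30):
    # Random-walk landmarks with a varying per-frame amplitude; stand-in data only.
    amp = jitter * rng.uniform(0.5, 1.5, size=(frames, 1, 1))
    return np.cumsum(rng.normal(size=(frames, 468, 3)) * amp, axis=0)

# Reference distribution built from synthetic "authentic" clips.
reference = np.array([micro_motion_metric(synthetic_clip(0.01)) for _ in range(50)])
ref_mean, ref_std = reference.mean(), reference.std(ddof=1)

# A perfectly frozen face has no micro-motion, so it falls below the reference.
frozen = np.zeros((30, 468, 3))
z_frozen = plausibility_z(micro_motion_metric(frozen), ref_mean, ref_std)
print(f"z-score of frozen clip: {z_frozen:.1f}")
```

In a real pipeline the landmark arrays would come from the face mesh rather than from a random walk, and the reference statistics would be fitted once on the authentic video set.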

Top Discriminator

Strongest single signal — Cohen's d = 2.8. Present in 95% of authentic faces; absent in 89% of deepfakes. No generative model currently reproduces this band.

Statistical Significance

All 12 metrics: p < 0.001, with Cohen's d ranging from 1.2 to 2.8 (“very large” to “huge” effects). Low inter-metric correlation (mean r = 0.33) suggests that the metrics carry largely non-redundant information.
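For readers who want to reproduce this kind of summary, here is a minimal sketch of the two statistics, run on synthetic data. It assumes Cohen's d is computed with a pooled standard deviation and that the inter-metric correlation is the mean absolute Pearson r over distinct metric pairs; the source does not state either choice, and the function names are illustrative.

```python
import numpy as np

def cohens_d(a, b):
    """Cohen's d with a pooled standard deviation (sample variances, ddof=1)."""
    a, b = np.asarray(a, float), np.asarray(b, float)
    na, nb = len(a), len(b)
    pooled_var = ((na - 1) * a.var(ddof=1) + (nb - 1) * b.var(ddof=1)) / (na + nb - 2)
    return (a.mean() - b.mean()) / np.sqrt(pooled_var)

def mean_abs_offdiag_corr(X):
    """Mean |Pearson r| over all distinct column pairs; X is (n_videos, n_metrics)."""
    r = np.corrcoef(X, rowvar=False)
    upper = np.triu_indices_from(r, k=1)
    return float(np.abs(r[upper]).mean())

rng = np.random.default_rng(0)

# Synthetic stand-ins for one metric measured on authentic and fake videos.
# The population effect size is 2.0 by construction (means 2 std units apart).
authentic = rng.normal(loc=1.0, scale=1.0, size=5000)
fake = rng.normal(loc=-1.0, scale=1.0, size=5000)
d = cohens_d(authentic, fake)

# Synthetic 12-metric matrix with independent columns, so correlations sit near 0.
X = rng.normal(size=(5000, 12))
r_bar = mean_abs_offdiag_corr(X)

print(f"d = {d:.2f}, mean |r| = {r_bar:.3f}")
```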

Methods — Part 2

Three-Stage Cascading Architecture

Binary Neural Network — Fast Classifier

Lightweight 1.2M-parameter network with knowledge distillation. Classifies easy cases (≈70% of videos) at 91.2% accuracy. Designed for maximum throughput on consumer hardware.

7 ms · 70% of videos

Wavelet + Optical Flow Analysis

Daubechies-4 wavelet decomposition combined with PWC-Net optical flow. Handles ambiguous cases (≈25%) that the fast classifier defers. Achieves 93.8% accuracy on its subset.

15 ms · 25% of videos

Adaptive Ensemble — Hardest Cases

Dynamic model weighting applied to the most challenging ≈5% of videos (diffusion-generated). Ensemble achieves 97.1% accuracy on this subset. The stage itself costs 20–30 ms, so only videos routed here can exceed the 33 ms threshold once the earlier stages are included; it is applied sparingly.

20–30 ms · 5% of videos

Overall system performance

14 ms average latency — the only method tested below the 33 ms real-time threshold, with 78% compute savings via cascade routing. System runs on a consumer RTX 3060 12 GB GPU.
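The routing idea can be sketched as follows. This is a back-of-envelope model, not the project's implementation: it assumes a deferred video also pays for every earlier stage, uses the midpoint of the stated 20–30 ms for the last stage, and uses a hypothetical confidence threshold of 0.9 for deferral. The estimate it produces ignores any overhead and is not meant to reproduce the measured 14 ms.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Stage:
    name: str
    latency_ms: float  # cost of running this stage on one video
    share: float       # fraction of all videos resolved at this stage

STAGES = (
    Stage("binary_nn", 7.0, 0.70),
    Stage("wavelet_optical_flow", 15.0, 0.25),
    Stage("adaptive_ensemble", 25.0, 0.05),  # midpoint of the stated 20-30 ms
)

def expected_latency_ms(stages):
    """Expected per-video latency when deferred videos also pay for earlier stages."""
    total, reaching = 0.0, 1.0
    for s in stages:
        total += s.latency_ms * reaching  # every video that reaches the stage pays for it
        reaching -= s.share               # videos resolved here go no further
    return total

def route(stage_confidences, threshold=0.9):
    """Index of the first stage confident enough to decide; the last stage always decides."""
    for i, conf in enumerate(stage_confidences[:-1]):
        if conf >= threshold:
            return i
    return len(stage_confidences) - 1

FRAME_BUDGET_MS = 1000 / 30  # 30 fps leaves about 33.3 ms per frame
estimate = expected_latency_ms(STAGES)
print(f"{estimate:.2f} ms expected, budget {FRAME_BUDGET_MS:.1f} ms")
print(route([0.97, 0.50, 0.80]), route([0.60, 0.95, 0.80]), route([0.60, 0.70, 0.55]))
```

Because most videos exit at the cheapest stage, the expected cost stays well under the frame budget even though the worst-case path through all three stages does not.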

Methods — Part 3

Temporal Consistency Fingerprinting (TCF)

Each video is mapped to a 256-dimensional embedding via triplet loss, capturing its temporal consistency signature. Temporal convolutions + self-attention model how biological signals evolve over time across the full clip, rather than analyzing isolated frames.
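As a reference point for the training objective, here is a minimal NumPy sketch of a standard triplet loss on 256-dimensional embeddings. The margin of 0.2, the squared Euclidean distance, and the L2 normalization are common defaults chosen for illustration; the source does not specify them, and the encoder that would produce the embeddings is omitted.

```python
import numpy as np

def l2_normalize(x, eps=1e-12):
    """Scale each embedding to unit length."""
    return x / np.maximum(np.linalg.norm(x, axis=-1, keepdims=True), eps)

def triplet_loss(anchor, positive, negative, margin=0.2):
    """Mean hinge triplet loss with squared Euclidean distance.

    Each input has shape (batch, dim). The loss is zero once every negative is
    at least `margin` farther from its anchor than the matching positive.
    """
    d_pos = np.sum((anchor - positive) ** 2, axis=-1)
    d_neg = np.sum((anchor - negative) ** 2, axis=-1)
    return float(np.mean(np.maximum(d_pos - d_neg + margin, 0.0)))

rng = np.random.default_rng(0)
anchor = l2_normalize(rng.normal(size=(8, 256)))

# Ideal case: the positive coincides with the anchor, the negative is antipodal.
loss_good = triplet_loss(anchor, anchor, -anchor)
# Worst case: the roles of positive and negative are swapped.
loss_bad = triplet_loss(anchor, -anchor, anchor)
print(loss_good, round(loss_bad, 6))
```

For unit-length embeddings the squared distance between antipodal points is 4, so the swapped case gives a loss of 4 plus the margin.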

Deepfake embeddings cluster separately from authentic in the embedding space with 78% example exclusivity, confirming zero-shot capability on unseen generation methods without any retraining or fine-tuning.

Results

Cross-dataset performance comparison

| Method | Within-dataset accuracy | Cross-dataset accuracy | Latency | AUC |
|---|---|---|---|---|
| DeepScope (Ours) | 85.3 ± 1.2% | 85.7 ± 2.1% | 14 ms | 0.927 |
| FakeCatcher | 91.2 ± 0.8% | 71.3 ± 1.5% | 72 ms | 0.768 |
| XceptionNet-V2 | 96.4 ± 0.9% | 65.2 ± 3.1% | 6 ms | 0.712 |
| EfficientNet-V2 | 97.1 ± 0.8% | 59.8 ± 3.4% | 38 ms | 0.698 |
| Vision Transformer | 95.8 ± 1.1% | 62.4 ± 3.2% | 52 ms | 0.683 |

Dataset: 27,129 videos across 4 datasets (FF++, Celeb-DF-v2, DFDC, Cosmos). Trained exclusively on FF++, tested on remaining three via 5-fold stratified CV. Single NVIDIA RTX 3060 12 GB, 18 hr training.
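The generalization gap can be read directly off the table by subtracting cross-dataset from within-dataset accuracy. The short sketch below does that arithmetic on the reported means only; it ignores the ± intervals, and the variable names are illustrative.

```python
# (within-dataset %, cross-dataset %) copied from the results table
RESULTS = {
    "DeepScope": (85.3, 85.7),
    "FakeCatcher": (91.2, 71.3),
    "XceptionNet-V2": (96.4, 65.2),
    "EfficientNet-V2": (97.1, 59.8),
    "Vision Transformer": (95.8, 62.4),
}

# Gap in percentage points: how much accuracy is lost on unseen datasets.
gaps = {name: round(within - cross, 1) for name, (within, cross) in RESULTS.items()}

# Cross-dataset lead of DeepScope over each baseline, in percentage points.
deepscope_cross = RESULTS["DeepScope"][1]
leads = {
    name: round(deepscope_cross - cross, 1)
    for name, (_, cross) in RESULTS.items()
    if name != "DeepScope"
}

for name, gap in gaps.items():
    print(f"{name:20s} gap = {gap:+.1f} pp")
```

On these figures the baselines lose roughly 20 to 37 points when moved to unseen data, while DeepScope's two accuracies differ by less than half a point.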

Conclusions

Key findings

Best cross-dataset generalization

Cross-dataset accuracy of 85.7% (+20.5 pp over XceptionNet) indicates a detector that stays functional on unseen data, unlike detectors that perform well only in-lab. All four success criteria met and exceeded.

Only real-time method tested

With an average latency of 14 ms (5.1× faster than FakeCatcher), DeepScope is the only method tested below the 33 ms real-time threshold, enabling live video conferencing, streaming, and social media moderation use cases.

Zero-shot detection on diffusion models

82.4% zero-shot accuracy on diffusion-generated content vs. 48.2% for XceptionNet. Biological signals generalize as generation methods evolve — without any retraining.

Statistical rigor: p < 0.001, Cohen's d = 1.52–2.13

All comparisons: p < 0.001 (McNemar's test, Bonferroni-corrected). Bootstrap CIs (n = 1,000) show no overlap with baselines. Effect sizes 1.52–2.13 = “Very Large” to “Huge.”
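The tests named here can be sketched in a few lines. This version uses the exact (binomial) form of McNemar's test, a plain Bonferroni multiplier, and a percentile bootstrap; which variants the project actually used is not stated, so treat these as assumptions. The counts and the per-video correctness vector are made up for illustration.

```python
from math import comb
import numpy as np

def mcnemar_exact(b, c):
    """Two-sided exact McNemar p-value from the discordant pair counts.

    b: videos model A gets right and model B gets wrong; c: the reverse.
    """
    n = b + c
    if n == 0:
        return 1.0
    tail = sum(comb(n, i) for i in range(min(b, c) + 1)) / 2 ** n
    return min(1.0, 2 * tail)

def bonferroni(p, n_comparisons):
    """Bonferroni-adjusted p-value, capped at 1."""
    return min(1.0, p * n_comparisons)

def bootstrap_accuracy_ci(correct, n_boot=1000, alpha=0.05, seed=0):
    """Percentile bootstrap CI for accuracy from a 0/1 per-video correctness vector."""
    rng = np.random.default_rng(seed)
    correct = np.asarray(correct, float)
    idx = rng.integers(0, len(correct), size=(n_boot, len(correct)))
    accs = correct[idx].mean(axis=1)
    lo, hi = np.quantile(accs, [alpha / 2, 1 - alpha / 2])
    return float(lo), float(hi)

# Illustrative counts: 10 discordant videos, all in favor of model A.
p_raw = mcnemar_exact(10, 0)
p_adj = bonferroni(p_raw, 4)  # four baselines appear in the results table

# Illustrative correctness vector: 857 of 1,000 videos classified correctly.
correct = np.array([1] * 857 + [0] * 143)
ci_lo, ci_hi = bootstrap_accuracy_ci(correct)
print(p_raw, p_adj, (round(ci_lo, 3), round(ci_hi, 3)))
```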

Limitations & Future Work

What comes next

Current limitations

- Western demographic bias in training data; may reduce accuracy on underrepresented populations
- Requires ≥2-second video clips; single frames cannot capture temporal biological dynamics
- Small diffusion dataset (500 videos) and single-GPU training environment (RTX 3060)

Future directions

- Diverse demographics, audio-visual multimodal integration
- Continual learning to adapt to emerging generation methods
- Edge / smartphone deployment for broader accessibility